Data Vault

Jisc Research Data Spring

Summary

From the project website:

- DataVault aims “to define and develop a Data Vault software system that will allow data creators to describe and store their data safely in one of the growing number of options for archival storage”.

From the Project Plan:

- The project’s 'Problem Statement' reads: “As part of typical suites of Research Data Management services, researchers are provided with large allocations of ‘active data store’. This is often stored on expensive and fast disks to enable efficient transfer and working with large amounts of data. However, over time this active data store fills up, and researchers need a facility to move older but valuable data to cheaper storage for long term care. In addition, research funders are increasingly requiring data to be stored in forms that allow it to be described and retrieved in the future. The Data Vault concept will fulfil these requirements for the rest of the data that isn’t publicly shared via an open data repository.”

From Spotlight Data: Jisc RDS Software Projects:

- "The project will allow researchers to safely archive their research data to predefined storage locations that include cloud and local storage (e.g. Arkivum, tape backup or AWS Glacier). It is designed to bridge the gap between the variety of storage options and the end user, while capturing metadata to allow the search and re-use of the data. The system has two components. The first is a Data Vault broker, which transfers the data from local storage to archive and includes policy, integrity and security. The second is the Data Vault user interface, which passes messages to the broker to start archival or retrieval tasks. Data is passed via a REST API.

- Key project outputs:

- DataVault software available on GitHub as open source

- DataVault demonstrators

  - Phase 1: Working system - single user to vault data

  - Phase 2: Additional features including users, an administration dashboard, extra filestore connectors (SFTP, Amazon Glacier, Dropbox), and user and group management

- Storage, workflows, metadata and system requirements assessed and documented"

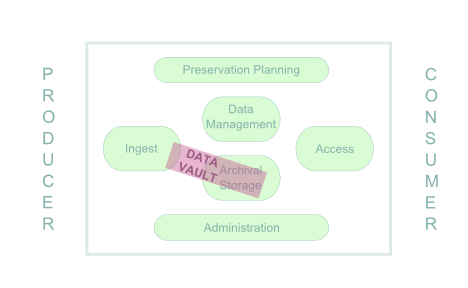

From a synthesis of the projects in the context of the OAIS model, by Jen Mitcham of the Filling the Digital Preservation Gap project:

- “The “DataVault” project at the Universities of Edinburgh and Manchester is primarily addressing the Archival Storage entity of the OAIS model. … The DataVault whilst primarily being a storage facility will also carry out other digital preservation functionality. Data will be packaged using the BagIt specification, an initial stab at file identification will be carried out using Apache Tika and fixity checks will be run periodically to monitor the file store and ensure files remain unchanged. The project team have highlighted the fact that file identification is problematic in the sphere of research data as you work with so many data types across disciplines. This is certainly a concern that the “Filling the Digital Preservation Gap” project has shared.”
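The packaging and fixity steps described in this quote can be illustrated with a minimal Python sketch using only the standard library. It is not Data Vault's code: `make_bag` and `verify_bag` are hypothetical helpers that write a BagIt declaration (`bagit.txt`) and a SHA-256 payload manifest, then re-hash the payload against that manifest, which is the essence of a periodic fixity check. A real deployment would more likely rely on an existing implementation such as the Library of Congress `bagit-python` library.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large research files never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def make_bag(bag_dir: Path) -> None:
    """Hypothetical helper: turn bag_dir (payload already in bag_dir/data) into a minimal bag."""
    # Required bag declaration, as defined by the BagIt specification (RFC 8493).
    (bag_dir / "bagit.txt").write_text(
        "BagIt-Version: 1.0\nTag-File-Character-Encoding: UTF-8\n", encoding="utf-8"
    )
    # One "<checksum>  <path>" line per payload file, sorted for a stable manifest.
    payload = sorted(
        p.relative_to(bag_dir).as_posix()
        for p in (bag_dir / "data").rglob("*")
        if p.is_file()
    )
    lines = [f"{sha256_of(bag_dir / rel)}  {rel}\n" for rel in payload]
    (bag_dir / "manifest-sha256.txt").write_text("".join(lines), encoding="utf-8")


def verify_bag(bag_dir: Path) -> list[str]:
    """Hypothetical fixity check: re-hash each manifest entry, return missing or changed paths."""
    problems = []
    manifest = (bag_dir / "manifest-sha256.txt").read_text(encoding="utf-8")
    for line in manifest.splitlines():
        expected, rel = line.split(maxsplit=1)
        path = bag_dir / rel
        if not path.is_file() or sha256_of(path) != expected:
            problems.append(rel)
    return problems


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        bag = Path(tmp)
        (bag / "data").mkdir()
        (bag / "data" / "readings.csv").write_text("t,value\n0,1.5\n")
        make_bag(bag)
        print(verify_bag(bag))  # []
        (bag / "data" / "readings.csv").write_text("t,value\n0,9.9\n")  # simulate an unnoticed change
        print(verify_bag(bag))  # ['data/readings.csv']
```

Re-running `verify_bag` on a schedule flags any file whose contents have changed or disappeared since packaging; it cannot, however, vouch for data that was already damaged before the manifest was written.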

Further information

Hyperlinks to further information on the project

Potential to enhance

Is there potential to leverage non-preservation focused developments to enhance preservation capabilities?

As it stands, data sent to Data Vault will not be checksummed until after it has been copied across at least one network connection, which significantly reduces trust in the completeness and accuracy of the data. It would be useful to exploit checksums where they are already available, for example where the source is data held in Dropbox. If the source is a network drive this will not be possible, and the problem becomes a more general data management issue with implications for the working practices of researchers. The best time to create checksums is at the point of data creation. Since that challenge goes beyond the remit of Data Vault, it may be useful in the meantime to consider a completeness check based on the number of files and the volume of data. The technologies employed by Data Vault may already provide this, but it would be worth verifying. See also a related discussion in the Consortial Approach... project.
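A completeness check of this kind needs no checksums at all: take an inventory (file count and total bytes) at the source before transfer, take another at the destination, and compare. The sketch below assumes both trees are readable from a Python process; `Inventory`, `take_inventory` and `check_complete` are hypothetical names, not part of Data Vault. It reports the result in the same `octetcount.streamcount` form as BagIt's optional `Payload-Oxum` field in `bag-info.txt`.

```python
import shutil
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Inventory:
    """Hypothetical summary of a directory tree: total bytes and number of files."""
    octets: int
    files: int

    def as_oxum(self) -> str:
        # Same shape as BagIt's optional Payload-Oxum field: "<octet count>.<stream count>"
        return f"{self.octets}.{self.files}"


def take_inventory(root: Path) -> Inventory:
    """Count regular files under root and sum their sizes; no hashing, so it is cheap."""
    octets = files = 0
    for path in root.rglob("*"):
        if path.is_file():
            octets += path.stat().st_size
            files += 1
    return Inventory(octets, files)


def check_complete(source: Inventory, dest: Inventory) -> list[str]:
    """Compare the pre-transfer inventory with one taken at the destination."""
    issues = []
    if dest.files != source.files:
        issues.append(f"file count {dest.files} != expected {source.files}")
    if dest.octets != source.octets:
        issues.append(f"size {dest.octets} bytes != expected {source.octets} bytes")
    return issues


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src, dst = Path(tmp) / "src", Path(tmp) / "dst"
        (src / "run1").mkdir(parents=True)
        (src / "run1" / "raw.bin").write_bytes(bytes(1000))
        (src / "notes.txt").write_bytes(b"calibrated 2015-10-01")
        before = take_inventory(src)  # taken at the source, before transfer
        shutil.copytree(src, dst)     # stand-in for the network transfer
        print(before.as_oxum(), check_complete(before, take_inventory(dst)))  # 1021.2 []
```

Such a check catches missing or truncated files cheaply, but a file of the right size with corrupted contents passes it, so it complements rather than replaces checksums created closer to the point of data creation.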

Collaboration

Is there potential for collaboration and/or exploiting existing/parallel work beyond the project consortiums?

Support for file format identification using Apache Tika will be explored. The Filling the Digital Preservation Gap project has noted the poor support for research data formats in existing solutions, and intends to address this challenge in its phase 3. The lack of a unified, community-owned and editable source of file format magic remains a challenge within the preservation community, so collaboration with Filling the Digital Preservation Gap on a single approach would clearly be beneficial. It may be useful to consider a PRONOM-backed identification tool such as DROID, FIDO or Siegfried, and to add a facility for contributing files that cannot be identified.
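The principle behind magic-based identification, and behind the suggested facility for collecting unidentified files, can be shown with a toy signature table. The sketch below is not DROID, FIDO, Siegfried or Apache Tika, and its handful of offset-0 signatures is hand-picked for illustration rather than taken from PRONOM; `identify` and `triage` are hypothetical names.

```python
from pathlib import Path
from typing import Optional

# Hypothetical, hand-picked signatures matched at offset 0 only. Registries such as
# PRONOM hold far more, with offsets, versions and priorities; this is illustrative.
SIGNATURES: list[tuple[bytes, str]] = [
    (b"\x89HDF\r\n\x1a\n", "HDF5"),
    (b"\x89PNG\r\n\x1a\n", "PNG image"),
    (b"%PDF-", "PDF document"),
    (b"PK\x03\x04", "ZIP container"),  # DOCX, XLSX and ODF files also start this way
    (b"\x1f\x8b", "gzip"),
    (b"CDF\x01", "NetCDF classic"),
    (b"CDF\x02", "NetCDF 64-bit offset"),
    (b"SIMPLE  =", "FITS"),
]
MAX_SIG_LEN = max(len(sig) for sig, _ in SIGNATURES)


def identify(path: Path) -> Optional[str]:
    """Return a format name if the file's leading bytes match a known signature."""
    with path.open("rb") as fh:
        head = fh.read(MAX_SIG_LEN)
    for sig, name in SIGNATURES:
        if head.startswith(sig):
            return name
    return None


def triage(paths: list[Path]) -> tuple[dict[str, str], list[str]]:
    """Split files into identified ones and unidentified candidates for contribution."""
    identified: dict[str, str] = {}
    unidentified: list[str] = []
    for path in paths:
        name = identify(path)
        if name is None:
            unidentified.append(str(path))
        else:
            identified[str(path)] = name
    return identified, unidentified
```

The `unidentified` list is where a contribution workflow could start: those files are exactly the samples a community format registry would need in order to add new research data signatures.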

The standard archival packages (SIP, AIP, DIP) under development by the EC-funded E-ARK Project (which are also based on BagIt) could be used to compare and validate the Data Vault package design, and could open up possibilities for further collaboration.

The Data Vault workshop (Manchester, October 2015) included a presentation from the ResearchObject.org project, which highlighted issues around metadata, in particular documentation and Representation Information, and noted the potential for collaboration. Links with this Manchester-based project are already strong, but awareness of its work could be stronger within the wider preservation community.

There is clear potential for further collaboration and join-up with other storage technologies, which the project identified and considered early on, as detailed here.

Considerations going forward

What are the key considerations (with regard to preservation) for taking forward the work beyond the current phase?

Although the project participants have worked hard to identify requirements and use cases, it is not completely clear how Data Vault will be used, by whom and in what situations. A working prototype and trials will help to develop this understanding and allow the scope of what the system supports to be pinned down. There are conflicting requirements: the system needs to be simple and quick enough that data creators will actually use it, but it also needs to support some level of preservation. Does this go beyond simply keeping the bits of the data in question? Should support be provided for documentation and Representation Information, or is it assumed that these could be included with the data placed into a Vault? How will changing data, and consequently safe disposal, be handled in practice?

There is a danger of scope creep, where Data Vault becomes another complex repository application rather than a storage broker meeting a specific and straightforward need. Discussion at the Data Vault workshop around the complexities of supporting access rights highlighted how slippery this slope is. The scope of any third phase should be carefully monitored.

Uptake and sustainability

What steps should be taken to ensure effective uptake and sustainability of the work within the digital preservation community?

The journey from Data Vault to a long-term preservation store is perhaps a little unclear and will need to be explored in trials of the prototype (and, more realistically and more challengingly, once deposits have grown over time). The choice of BagIt for packaging deposits, together with the various open technologies chosen, provides a solid foundation for Data Vault that should ease concerns about the exit strategy, a critical consideration for any system with a long-term preservation focus. Note the ongoing uptake of the BagIt standard, demonstrated by another recently released preservation tool with some parallels to this work: Exactly.

Sustainability of any grant-funded open source software is always challenging. Discussions at the Data Vault workshop noted the benefit of keeping the project scope tight and delivering a solid working application before looking at additional requirements. This appears to be the most crucial issue at this stage of the work. If a dependable working solution can evolve at Manchester and Edinburgh, further expansion can then be considered as a genuine open source project and/or as part of the proposed Jisc Research Data Service.

An entry for Data Vault was added to the COPTR registry.

Project website sustainability checklist

A brief checklist ensuring the project work can be understood and reused by others in the future.

| Task | Score |
|---|---|
| Clear project summary on one page, hyperlink heavy | 1 |
| Project start/end dates | 1 |
| Clear licensing details for reuse | 1 |
| Clear contact details | 0 |
| Source code online and referenced from website | 2 |

Score key: 2 = present, 1 = partial, 0 = missing

Key Recommendations

- Challenge: risk of data corruption without integrity checking

  - Potential to exploit checksums from third-party services

  - Potential to explore a completeness check based on number of files and total data size

  - Consideration of the broader working practices of researchers and the use of checksums at the point of data creation

- Potential to collaborate on file format identification with Filling the Digital Preservation Gap and/or The National Archives, alongside consideration of PRONOM-based identification tools

- Opportunity to validate the package design, and perhaps collaborate further on interoperability, with the E-ARK Project

- Potential for collaboration with other storage technologies/initiatives

- Challenge: scope creep. Suggest maintaining a tight focus on delivering a working system, to inform further requirements via operational trials and to provide a solid foundation for taking Data Vault forward once grant funding ends.

- Suggest adding key contact, licensing and other project details to the project website "About" page, and summarising the most useful outputs on project closure.